For years, the technology industry has operated under the shadow of a single, green-tinted giant. NVIDIA, through a combination of visionary leadership and the early realization that GPUs were the secret sauce for parallel processing, effectively “owned” the AI market before most of us even knew there was an AI market to own. But as any long-term observer of this industry knows, dominance often breeds a certain kind of deafness. When a company stops listening to its customers because it believes its product is the only game in town, it creates a massive opening for a disciplined, focused competitor.

That competitor is AMD, and their recent performance in the MLPerf Inference 6.0 benchmarks suggests that the window of NVIDIA’s absolute dominance is closing much faster than the market originally anticipated.

The Critical Importance of MLPerf

In the world of technology, we are often drowning in “hero benchmarks” – carefully curated, vendor-specific tests designed to make a product look like it’s breaking the laws of physics. MLPerf is different. It is the industry standard, providing a level playing field where hardware is tested against real-world AI workloads like Large Language Models (LLMs), image generation, and recommendation engines.

MLPerf matters because it removes the “marketing fluff.” For IT decision-makers and cloud providers who are spending billions on infrastructure, MLPerf is the survival guide. It measures not just raw speed, but efficiency and scalability. AMD’s recent results, particularly with the Instinct MI325X accelerators, demonstrate that they aren’t just participating in the AI race anymore; they are now setting the pace in key metrics like Llama-3 performance and latency.

The NVIDIA Exposure: A Problem of Listening

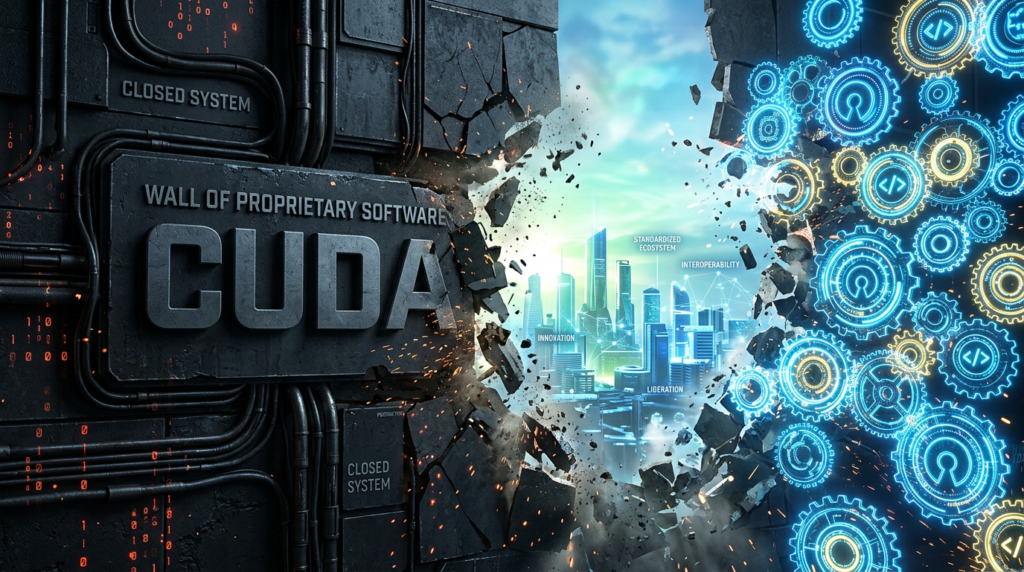

NVIDIA is currently in a position similar to where Intel was in the early 2000s or where IBM was in the late 1980s. When you have 90% market share, you tend to dictate terms rather than negotiate them. I’ve been hearing a growing chorus of complaints from enterprise customers regarding NVIDIA’s proprietary “moat.” Between the high cost of entry, the complexities of the CUDA software stack, and a perceived lack of flexibility in meeting specific customer needs, NVIDIA is increasingly seen as a “tax” on AI progress.

Jensen Huang has done a brilliant job building a powerhouse, but there is a growing sentiment that NVIDIA is focused on its own roadmap at the expense of what the customers are actually asking for: lower TCO (Total Cost of Ownership), open standards, and better availability. By locking customers into a closed ecosystem, NVIDIA has inadvertently turned the industry toward open alternatives.

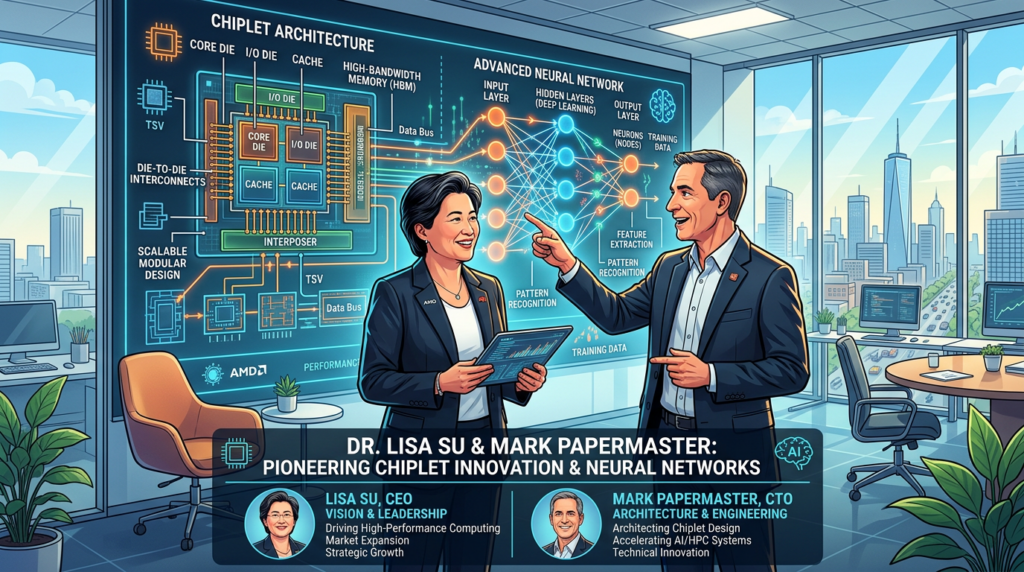

The Renaissance of AMD: Su and Papermaster

To understand why AMD is now the primary threat to NVIDIA, you have to look back at the leadership of Dr. Lisa Su and CTO Mark Papermaster. When Lisa Su took over, AMD was effectively on life support. She made the hard call to pivot away from low-margin markets and double down on high-performance computing.

Mark Papermaster’s architectural leadership cannot be overstated. By focusing on a “chiplet” architecture and a consistent, multi-generational roadmap, AMD was able to out-maneuver Intel in the data center with EPYC. Now, they are applying that same disciplined execution to AI with the ROCm software platform and the Instinct line.

Unlike NVIDIA, AMD has leaned heavily into “open” ecosystems. By making ROCm more accessible and ensuring it plays well with industry-standard frameworks like PyTorch and JAX, AMD is listening to the customers who are tired of being locked into a single vendor’s proprietary silo. AMD is winning because they are acting like a partner, while NVIDIA is acting like a sovereign.
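To make the portability point concrete, here is a minimal sketch of device-agnostic PyTorch code. It relies on the fact that ROCm builds of PyTorch expose AMD GPUs through the same `torch.cuda` API and set `torch.version.hip`; the helper name `describe_backend` is my own, and the fallback for machines without PyTorch is only there so the sketch runs anywhere.

```python
try:
    import torch
except ImportError:  # assumption: keep the sketch runnable without PyTorch installed
    torch = None


def describe_backend():
    """Return (device, backend); backend is 'rocm', 'cuda', or 'cpu'.

    Hypothetical helper for illustration, not part of PyTorch.
    """
    if torch is None or not torch.cuda.is_available():
        return "cpu", "cpu"
    # ROCm builds report a HIP version; CUDA builds leave it as None.
    backend = "rocm" if getattr(torch.version, "hip", None) else "cuda"
    # On both vendors the device string is "cuda", so model code does not change.
    return "cuda", backend


device, backend = describe_backend()
print(f"device={device} backend={backend}")

if torch is not None:
    # The same tensor code runs unchanged on an NVIDIA GPU, an AMD GPU, or the CPU.
    x = torch.ones(4, 4, device=device)
    print((x @ x).sum().item())
```

The model code never names a vendor. Only the installed PyTorch build decides which hardware does the work, which is the practical meaning of a lower switching cost.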

AMD’s AI Performance: Closing the Gap

AMD’s performance in MLPerf 6.0 isn’t just an incremental improvement; it’s a breakthrough. The Instinct MI325X benefits from large gains in HBM3E memory capacity and bandwidth, which address the primary bottlenecks for modern generative AI. While NVIDIA’s H200 and Blackwell chips are impressive, the AMD MI325X is delivering comparable, and in some cases superior, inference performance for the latest Llama-3 models.
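Why memory capacity and bandwidth matter so much can be shown with back-of-the-envelope arithmetic. The sketch below assumes a simplified model in which generating each token requires reading every model weight from memory once, so bandwidth caps the single-stream token rate. The function names and all input figures are illustrative assumptions, not vendor specifications or MLPerf results.

```python
def decode_tokens_per_second(bandwidth_tb_s, params_billion, bytes_per_param):
    """Upper bound on single-stream decode rate when each generated token
    requires one full read of the model weights from memory."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return bandwidth_tb_s * 1e12 / weight_bytes


def fits_on_one_device(capacity_gb, params_billion, bytes_per_param, kv_cache_gb=0.0):
    """True if the weights plus a KV-cache budget fit in one device's memory."""
    weight_gb = params_billion * bytes_per_param  # 1e9 params * bytes / 1e9 bytes per GB
    return weight_gb + kv_cache_gb <= capacity_gb


# Illustrative inputs (assumptions): a 70B-parameter model stored in 16-bit weights.
PARAMS_B, BYTES = 70, 2

# Two hypothetical accelerators: (memory capacity in GB, bandwidth in TB/s).
for name, capacity_gb, bandwidth in [("smaller", 128, 4.0), ("larger", 256, 6.0)]:
    rate = decode_tokens_per_second(bandwidth, PARAMS_B, BYTES)
    fits = fits_on_one_device(capacity_gb, PARAMS_B, BYTES, kv_cache_gb=20)
    print(f"{name}: about {rate:.1f} tokens/s per stream at most, fits on one device: {fits}")
```

Under these assumptions, the higher-bandwidth part has a proportionally higher ceiling, and the larger memory lets the whole model sit on one device instead of being split across two. Real deployments batch requests and quantize weights, so treat this as a rough bound rather than a prediction.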

This is critical because the AI market is shifting from training to inference. While training large models takes massive power, the long-term revenue in AI is in running those models (inference). If AMD can provide a more cost-effective, open, and equally powerful inference engine, the economic argument for staying with NVIDIA begins to crumble.
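The economic argument comes down to simple arithmetic: what does it cost to serve a million tokens once hardware and electricity are both counted? The sketch below is one way to frame that, assuming straight-line amortization and ignoring cooling, networking, and staffing. The function name and every input figure are hypothetical placeholders, not real prices or benchmark numbers.

```python
def cost_per_million_tokens(hardware_cost, lifetime_years, power_kw,
                            price_per_kwh, tokens_per_second, utilization):
    """Amortized hardware plus energy cost per one million generated tokens."""
    hours = lifetime_years * 365 * 24
    # Simplification: the device draws full power for its entire service life.
    energy_cost = power_kw * hours * price_per_kwh
    # Tokens are only produced during the utilized fraction of that time.
    total_tokens = tokens_per_second * utilization * hours * 3600
    return (hardware_cost + energy_cost) / total_tokens * 1e6


# Hypothetical comparison: same throughput and power draw, different purchase price.
baseline = cost_per_million_tokens(
    hardware_cost=30_000, lifetime_years=4, power_kw=1.0,
    price_per_kwh=0.10, tokens_per_second=2_000, utilization=0.5)
cheaper = cost_per_million_tokens(
    hardware_cost=20_000, lifetime_years=4, power_kw=1.0,
    price_per_kwh=0.10, tokens_per_second=2_000, utilization=0.5)

print(f"baseline: ${baseline:.4f} per million tokens")
print(f"cheaper:  ${cheaper:.4f} per million tokens")
```

With these made-up inputs, hardware price dominates the energy bill, so a lower purchase price at equal throughput translates almost directly into a lower cost per token. That is the calculation a CFO will run before renewing with any single vendor.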

The Changing AI Landscape of 2026

This year has marked a transition from “AI Hype” to “AI Reality.” In 2024 and 2025, companies were buying every GPU they could find, regardless of price or fit. In 2026, we are seeing the “Great Rationalization.” CFOs are now asking for ROI. They are looking at the power bills for these massive clusters and demanding better efficiency.

Over the rest of the year, we expect to see a surge in “Edge AI” and localized LLMs. The market is moving away from massive, monolithic models toward specialized, efficient ones. This plays directly into AMD’s strengths in versatile, high-memory hardware. As enterprises realize they don’t need a massive NVIDIA cluster to run a specialized internal model, AMD’s value proposition becomes undeniable.

The Competitive Pivot

NVIDIA’s primary defense has always been CUDA. However, the industry is moving toward “software-defined hardware.” Tools like OpenAI’s Triton compiler and the growth of the Unified Acceleration Foundation (UXL) are effectively neutralizing the CUDA advantage. Once the software barrier is gone, the competition comes down to hardware performance, power efficiency, and price, all areas where AMD has historically excelled.

Wrapping Up

The MLPerf 6.0 results are a “shot across the bow” for NVIDIA. They confirm that AMD, under the steady hand of Lisa Su and the technical brilliance of Mark Papermaster, has reached performance parity in the most important AI workloads.

NVIDIA remains a formidable opponent, but its lack of focus on customer flexibility and its insistence on a closed ecosystem are creating a vacuum that AMD is more than happy to fill. For the first time in the AI era, there is a legitimate choice. And as the market shifts toward inference and cost-efficiency, that choice is increasingly looking like AMD.

In this industry, you either listen to your customers or you watch them leave. AMD is listening. NVIDIA, it seems, is still too busy listening to its own hype.